AI Foundations - Semantic Similarity

AI Foundations - Semantic Similarity

This post is written for those interested in and exploring the world of AI applications, but does not require expertise in the area.

Semantic similarity - It’s the powerhouse of the cell…

Sorry, I was thinking about Mitochondria again.

But if we traded “cell” for “majority of AI applications”, we wouldn’t actually be very far off the mark.

Semantic similarity is the bedrock of several other foundational technologies in the AI space right now. Just to name a few:

- Semantic Search - the ability to search for content based on meaning, rather than specific keywords

- Retrieval Augmented Generation (RAG) - an extension of Semantic Search, really. This is what allows us to build chat bots and applications with up-to-date information about something (flights, booking information, etc…)

Today, however, I wanted to focus on the utility and general technical principles of pure, unadulterated semantic similarity, via a case study.

Introduction - Word Alignment

Word alignment refers to an old natural language processing (NLP) problem in which we try to map the matching words between a sentence and its translation into another language.

We’ll call the original sentence the ‘source’ sentence, and the translation the ‘target’. We will refer to both sentences together as the ‘sentence pair.’

Let’s look at an example:

Source (English): Hello, my name is Ben.

Target (Portuguese): Olá, meu nome é Ben.

The word alignment would be as follows:

{

"Hello": "Olá",

"my": "meu",

"name": "nome",

"is": "é",

"Ben": "Ben"

}What about Semantic Similarity?

So some people like to spend their days putting words next to each other - who cares?

Great question. Let’s get back to how semantic similarity works, and then maybe the case study will seem more interesting.

What do we mean by ‘Semantic Similarity?’

Semantic - “Relating to meaning in language”

Similarity - “I’m not going to define this one for you.”

So ‘Semantic Similarity’ is generally the similarity in meaning between words.

‘Car’ is similar to ‘bus’, but is not very similar to ‘potato’. Most of us can agree on that. But words themselves are actually not very useful in AI applications - we need numbers.

Semantic Similarity - Embeddings

Embeddings are numerical representations of semantic meaning.

They’re created by embedding models, which are trained to understand text through a similar process as the LLMs we all know and love (ChatGPT, Claude, Bard, etc…) - except instead of producing a poem about your dog, they produce the embedding equivalent of the text they received.

Embeddings are stored as vectors. Do you remember those numbers between square brackets that we learned about in high-school and then decided were unimportant? Those are the ones.

Let’s continue with our 3 example words and get their real vector embeddings with a simple Python script I’ll place at the end of the article. The vectors have been abbreviated here:

car: [-0.007485327776521444, -0.021592551842331886, ...]

bus: [-0.007484291680157185, -0.02819395251572132, ...]

potato: [-0.002372206188738346, -0.03133610263466835, ...]Semantic Similarity - Calculating Similarity

One of the useful properties of vectors is that we can calculate how close (or how far away) one vector is from another. It’s kind of like how we calculate how far away one point is from another on a two dimensional plot.

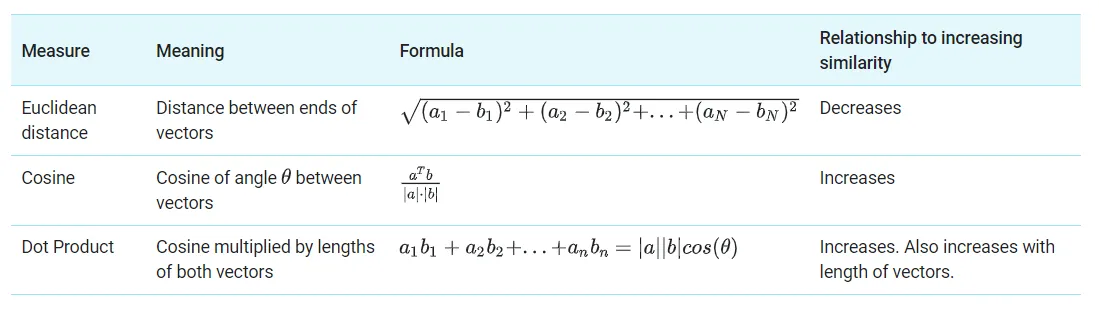

For those who are curious, we have 3 different ways to calculate the similarity between 2 vectors:

Regardless of how we choose to calculate similarity, it’s important to recognize the significance of the result: because vector embeddings represent semantic meaning, the similarity between vector embeddings is a measure of semantic similarity.

Because vector embeddings represent semantic meaning, the similarity between vector embeddings is a measure of semantic similarity.

This matters because we can actually use math to tell us that “car” is similar to “bus”, but less similar to “potato.”

Semantic Similarity - Nearest Neighbor

Hopefully you can begin to see the value here. If not - no worries. Nobody’s thrown any rotten fruit or booed me off stage yet, so I’m happy to continue.

Let’s take a look at the similarity calculation results from our Python script. I used cosine similarity here, and applied some extra math to scale our results:

car-bus similarity: 0.504094714118903

car-potato similarity: 0.3346890790344481

bus-potato similarity: 0.2529494236316173The nearest neighbor is how we refer to the vector that is most similar to another vector. In our case, car and bus are nearest neighbors, while potato is likely feeling quite lonely.

Case Study - Word Alignment

We’re ready to bring this full circle with our case study! Remember the nerds who sit in their rooms figuring out how to put matching words together?

Being able to automatically map words within source-target sentence pairs is actually very valuable.

Imagine the impact in the translation industry if we could accurately map sentence pairs without costly human effort? Quality and efficiency would increase dramatically, and we would make a big stride towards training better AI translation models.

Imagine if every famous (and already translated) book was able to be converted into an interlinear format? This would be an enormous boon for language learners, linguists, publishing companies, and authors.

Now that you’re either convinced or confused, let’s proceed.

We can combine these concepts together to use semantic similarity to align words in a sentence pair:

- We get the embedding for every word in both source and target sentences. We will use a multi-lingual embedding model that has been trained on a variety of languages. This means that the same word in different languages will have a similar embedding.

- We will calculate the similarity between each word in the source and target text.

- Using these similarities - for each word in the source text we will find the nearest neighbor in the target.

- This method isn’t foolproof, so we have to set a threshold score. If the nearest neighbor is not very similar, we will not include it.

Ta-da! We have automatically completed a simple word alignment task. Here are the results from our Python script:

{

"matched_pairs": [

{

"eng": "hello",

"pt": "olá",

"cosine_similarity": 0.981296718120575

},

{

"eng": "my",

"pt": "meu",

"cosine_similarity": 0.8685142397880554

},

{

"eng": "name",

"pt": "nome",

"cosine_similarity": 0.9724475145339966

},

{

"eng": "is",

"pt": "é",

"cosine_similarity": 0.8680533170700073

},

{

"eng": "Ben",

"pt": "Ben",

"cosine_similarity": 0.9999999403953552

}

]

}Conclusion

I would be thrilled to know if you learned anything today, or found this content interesting. My assumption is that if you read this far you either did find this interesting, or you’re my mom. (Hi mom).

Either way, I would appreciate your feedback. This is my first foray into technical writing, and it was quite fun for me.

Thanks for reading, and have a good one,

Ben

Code Implementation

# Openai for embeddings and calculations in examples

import openai

import openai.embeddings_utils

# Embedding model and cosine similarity calculation for case study

from sentence_transformers import SentenceTransformer, util

# Your openai api key

openai.api_key = 'your-openai-api-key'

# Helper function to get a single embedding

def get_embedding(text: str, model="text-embedding-ada-002") -> list[float]:

return openai.Embedding.create(input=[text], model=model)["data"][0]["embedding"]

# Helper function to rescale the similarity result

def rescale_similarity(value):

return (value - 0.7) / 0.3

# Get embeddings

car = get_embedding("car")

bus = get_embedding("bus")

potato = get_embedding("potato")

print(f"car: {car}")

print(f"bus: {bus}")

print(f"potato: {potato}")

# Get rescaled cosine similarities

car_bus = rescale_similarity(openai.embeddings_utils.cosine_similarity(car, bus))

car_potato = rescale_similarity(openai.embeddings_utils.cosine_similarity(car, potato))

bus_potato = rescale_similarity(openai.embeddings_utils.cosine_similarity(bus, potato))

print(f"car-bus similarity: {car_bus}")

print(f"car-potato similarity: {car_potato}")

print(f"bus-potato similarity: {bus_potato}")

# Define source and target words

english_words = ["hello", "my", "name", "is", "Ben"]

portuguese_words = ["olá", "meu", "nome", "é", "Ben"]

# Load multi-lingual embeddings model

model = SentenceTransformer('sentence-transformers/paraphrase-multilingual-mpnet-base-v2')

# Get embeddings

english_embeddings = model.encode(english_words, convert_to_tensor=True)

portuguese_embeddings = model.encode(portuguese_words, convert_to_tensor=True)

# Initialize matched word pairs

pairs = []

# For each target word, collect its nearest source word within the minimum threshold

for i, english_embedding in enumerate(english_embeddings):

threshold_score = 0.8675

nearest_word = ""

for j, portuguese_embedding in enumerate(portuguese_embeddings):

cosine_score = util.cos_sim(portuguese_embedding, english_embedding).item()

if cosine_score > threshold_score:

threshold_score = cosine_score

nearest_word = portuguese_words[j]

# If we found a nearest word, append the pair to the json object

if nearest_word != "":

new_pair = {

"engish_word": english_words[i],

"portuguese_word": nearest_word,

"cosine similarity": threshold_score

}

pairs.append(new_pair)

print(f"matched pairs: {pairs}")